News & Stories

Siri human reviewers listened to up to 1,000 recordings every day

The Irish newspaper Irish Examiner recently reported details of Apple's program for human evaluation of Siri recordings. According to one contractor employee, reviewers sorted and analyzed up to 1,000 Siri recordings every day.

The employee, who previously worked for the Irish data analytics company GlobeTech, said that Apple shared recordings collected by the Siri voice assistant with these contractors. Most recordings were voice commands only a few seconds long, but reviewers occasionally heard private material, such as discussions of personal data or snippets of conversation.

Employees spent their days classifying and grading recordings: for example, whether Siri had been activated by accident, whether it answered the user’s question correctly, and whether the content was appropriate.

Every employee assigned to Siri review was also required to sign a confidentiality agreement before starting, prohibiting them from disclosing details of the work or even that they were working for Apple.

The report also noted that although each Siri recording was anonymized, reviewers could still make out speakers’ accents. Most of the users they heard had Canadian, Australian, or British accents, and a small team handled content in other European languages.

After the British newspaper The Guardian exposed the practice last month, GlobeTech banned employees from carrying mobile phones at work. Apple then announced that it was suspending the Siri human evaluation program, and the company immediately let these employees go.

In an official statement in early August, Apple said it would suspend human listening to Siri audio and that a future software update would let users choose whether to take part. In an earlier statement, Apple said that these Siri conversations were analyzed in a secure environment and that the uploaded data amounted to less than 1% of all Siri data.

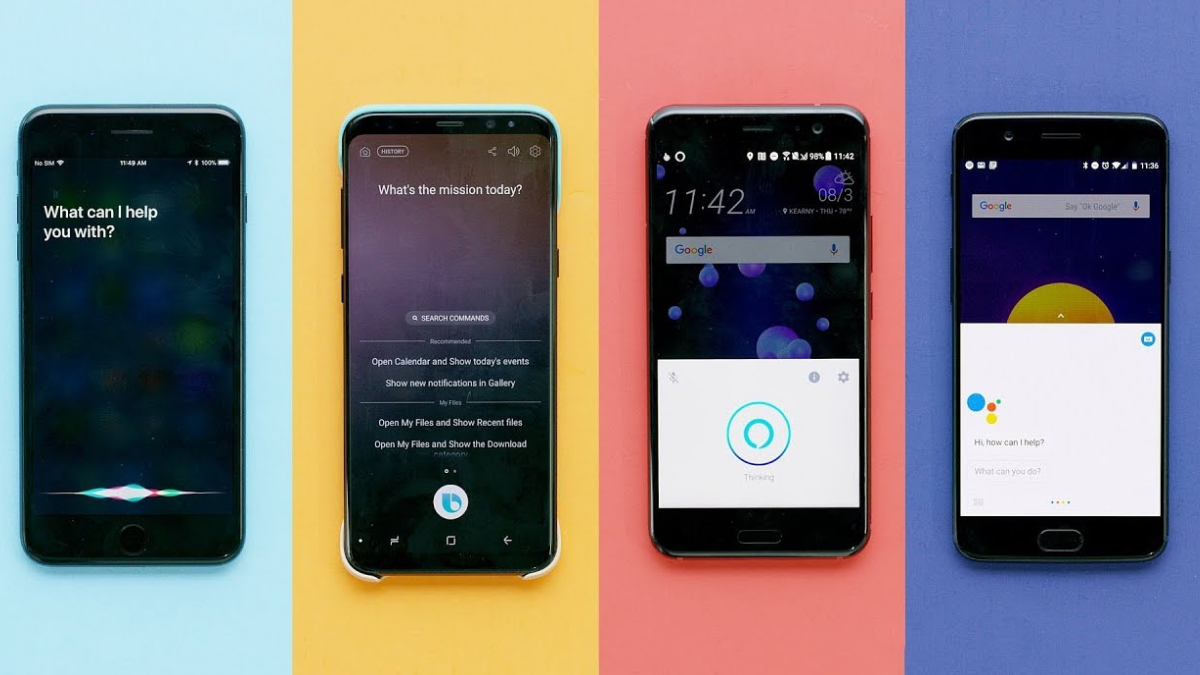

In fact, Apple is not the first company found to have people listening to users’ voice recordings. Amazon (Alexa), Microsoft (Cortana), and Google (with its voice assistant) have all captured and analyzed user speech. The goal is to use human review to train voice assistants, improve how well they understand users, and make them more responsive.

After Apple’s case came to light, Google also suspended human listening and transcription of its voice assistant recordings in Europe.

But for Apple, which promotes personal privacy as one of its products’ selling points, the same practice naturally drew more public criticism and controversy. The incident also shows how limited today’s voice assistants still are as “smart” services.

In theory, a sufficiently smart voice assistant could analyze speech by machine, or locally on the device, without uploading recordings for human review.

The employee said he understands why Apple did this, but added that Apple never told users their Siri audio might be uploaded and reviewed; Apple’s own privacy policy never mentioned it. That is why the revelation caused so much dissatisfaction among users.

“Uploading users’ private content without their consent: I think that is the root cause of the incident.”

Apple Employee


How to start earning $1 million in 3 months through Shopify

“Shopify is better than any other platform we’ve played with, and we’ve played with them all.”

First, build your Shopify store:

- Choose an ecommerce platform.

- Add the products you want to sell.

- Create key pages for your store.

- Pick a theme and customize your online store.

- Customize your shipping settings.

- Configure your tax settings.

- Set up your payment gateway and payouts.

- Prepare your store for launch.

1. Choose an ecommerce platform

An ecommerce platform lets you build and launch an online store, make sales, and fulfill orders. Most people think an ecommerce platform is just a website builder where you list new products and accept payments online. But it can do much more than that.

Your ecommerce platform acts as the control center for your entire business, controlling everything from inventory to marketing, giving you all the tools you need to sell online and provide customer support.

Choosing the right product for your business

- Based on supplier reputation. If you choose products from a supplier you know has good quality products, then your customers are more likely to be happy with their purchase. …

- Based on market trends. …

- Based on fulfilling a unique need. …

- Based on a demographic. …

- Based on what you would buy.

Start a PPC campaign through social media and Google to reach millions of buyers for your product and start earning millions of dollars.

Find built-in tools that help you create, execute, and analyze digital marketing campaigns. We help reduce the barriers to business ownership to make commerce better for everyone. Unlimited 24/7 support for you.


How Facebook users make money from the Facebook app

The good news? Facebook is rolling out new and exciting ways to make money, largely aimed at entrepreneurs and creators with Facebook followings. Whether you’re looking to make some extra money on the side or find more customers for your existing business, here are six ways you can monetize your Facebook audience in 2022, plus the requirements you’ll need to meet to do it.

#1 Make money through Facebook Stars

Facebook Stars is a feature that allows you to monetize your video content, photo posts, and text posts. Viewers can buy Stars and send them to you while you’re live or on on-demand videos that have Stars enabled. For every Star you receive, Facebook pays you USD 0.01.

#2 Get paid for Facebook Stars

To receive payouts for Facebook Stars, you must have a payout account set up. This is done during the Stars setup process.

Please note that we only send payments when your total balance reaches at least USD 100 or 10,000 Stars. Payouts may be delayed in cases where suspicious or fraudulent activity is detected. International payments may take up to several days to arrive in your bank account, depending on your banking institution’s processes.

Note: US creators receive their Stars payout once their balance crosses the USD 25 minimum.

#3 Level Up and Stars earnings

Facebook can only pay out Stars earnings in countries where the gaming Level Up program is available, and it publishes the list of eligible countries. If you submit bank account information from outside these countries, you will not be able to monetize.

#4 Payout schedule for creators

Facebook Stars payouts will be issued to your account approximately 21 days after the end of the month in which Stars were received. For example, Stars earnings you receive in June will be paid out in July.

Each month, you can expect to receive:

- An invoice that will include earnings from the previous month’s pay period.

- A payment as long as you made at least USD 100 (USD 25 for US creators) in a given month or from your cumulative months’ earnings. For example, if you make USD 50 in April and USD 80 in May, you’ll be paid in June.
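The threshold and roll-over rule above can be sketched in a few lines of Python. This is a minimal illustration, not Facebook’s code or API: the `payouts` function and its month-number input are hypothetical, it assumes earnings are counted in whole cents (one Star = USD 0.01), and it simplifies the roughly 21-day delay to “paid in the following month.”

```python
from typing import List, Tuple

# Per the article, each Star pays USD 0.01, so one Star = one cent.
# Working in integer cents avoids floating-point rounding.
CENTS_PER_STAR = 1
GLOBAL_THRESHOLD_CENTS = 10_000  # USD 100 (= 10,000 Stars)
US_THRESHOLD_CENTS = 2_500       # USD 25 minimum for US creators


def payouts(monthly_stars: List[Tuple[int, int]], threshold_cents: int) -> List[Tuple[int, int]]:
    """Hypothetical helper: return (payout_month, amount_cents) pairs.

    monthly_stars holds (month 1-12, Stars received) in chronological order.
    Unpaid earnings roll over until the cumulative balance reaches the
    threshold; that balance is then paid in the following month.
    """
    balance = 0
    schedule = []
    for month, stars in monthly_stars:
        balance += stars * CENTS_PER_STAR
        if balance >= threshold_cents:
            pay_month = month % 12 + 1  # next month; December rolls over to January
            schedule.append((pay_month, balance))
            balance = 0  # balance is cleared once it has been paid out
    return schedule


# The article's example: USD 50 in April (5,000 Stars) and USD 80 in May (8,000 Stars).
print(payouts([(4, 5_000), (5, 8_000)], GLOBAL_THRESHOLD_CENTS))  # [(6, 13000)]
```

Passing `US_THRESHOLD_CENTS` instead applies the USD 25 minimum for US creators; for example, 3,000 Stars received in April would be paid in May.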

#5 More ways to make money on Facebook

Create videos with in-stream ads

Include ads in your videos.

In-stream ads help you earn money by including short ads before, during or after your videos. We automatically identify natural breaks in your content to place your ads, or you can choose your own placements. Your earnings are determined by things such as number of video views and who the advertisers are.

#6 Fan subscriptions

Add a paid subscription to Pages.

Fan subscriptions allow the audience that cares most about your Page to directly fund it through monthly, recurring payments that you set. Identify supporters by the special badge we provide them in comments, and reward them with perks such as exclusive content and discounts.

#7 Branded content

Generate revenue by publishing content that features or is influenced by a business partner. Brands want to work with content creators and their audiences. To make this easier, safer and more impactful for both parties, we created a tool, the Brand Collabs Manager, which enables you to find and connect with each other.

#8 Subscription groups

Add a paid membership to groups.

Subscription groups let group admins sustain themselves through subscriptions, so they can invest further in their communities.

#9 Earn money from your live videos

Take your live streams to the next level. Stars help you earn money for connecting with fans during your live videos. Fans can buy and send Stars, a virtual good, in the comments of a live video, and you earn one cent for every Star you receive. Stars are a fun way for fans to express themselves and show their support in the comments of a video.

#10 Check your Facebook monetization eligibility

There are a handful of ways to make money from your Facebook content, but first you must be eligible to do so. This means your Facebook page and the content you post on it must abide by the platform’s eligibility requirements, which are grouped into three categories:

- Facebook Community Standards: these are the platform’s foundational rules, such as no graphic or unsafe content

- Partner Monetization Policies: these rules are for your Facebook page as a whole, as well as the content you create, how you share your content, and how you receive and make online payments

- Content Monetization Policies: these are content-level rules that apply to every piece of content you post, such as no violent or profane content


Huawei Mate XS Specifications, Usage, & Prices

Read Huawei Mate XS phone details, prices, specifications, product quick start guide, user guide, and reviews.

| Category | Feature | Specification |
|---|---|---|
| NETWORK | Technology | GSM/HSPA/LTE/5G |
| | 2G bands | GSM 850 / 900 / 1800 / 1900 (SIM 1 & SIM 2) |
| | 3G bands | HSDPA 800 / 850 / 900 / 1700 (AWS) / 1900 / 2100 |
| | 4G bands | LTE band 1(2100), 2(1900), 3(1800), 4(1700/2100), 5(850), 6(900), 7(2600), 8(900), 9(1800), 12(700), 17(700), 18(800), 19(800), 20(800), 26(850), 28(700), 32(1500), 34(2000), 38(2600), 39(1900), 40(2300), 41(2500) |
| | 5G bands | 5G band 1(2100), 3(1800), 28(700), 38(2600), 41(2500), 77(3700), 78(3500), 79(4700); SA/NSA |
| | Speed | HSPA 42.2/5.76 Mbps, LTE-A Cat21 1400/200 Mbps, 5G (2+ Gbps DL) |
| BODY | Dimensions | Unfolded: 161.3 x 146.2 x 5.4 mm; folded: 161.3 x 78.5 x 11 mm |
| | Weight | 300 g (10.58 oz) |
| | Build | Plastic front, aluminum back, aluminum frame |
| | SIM | Hybrid Dual SIM (Nano-SIM, dual stand-by) |
| DISPLAY | Type | Foldable OLED capacitive touchscreen, 16M colors |
| | Size | 8.0 inches, 205.0 cm² (~86.9% screen-to-body ratio) |
| | Resolution | 2200 x 2480 pixels (~414 ppi density) |
| | Cover display (folded) | 6.6″ AMOLED, 1148 x 2480 pixels (19.5:9) |
| PLATFORM | OS | Android 10.0; EMUI 10.0 |
| | Chipset | HiSilicon Kirin 990 5G (7 nm+) |
| | CPU | Octa-core (2×2.86 GHz Cortex-A76 & 2×2.36 GHz Cortex-A76 & 4×1.95 GHz Cortex-A55) |
| | GPU | Mali-G76 MP16 |
| MAIN CAMERA | Quad | 40 MP, f/1.8, 27mm (wide), 1/1.7″, PDAF; 8 MP, f/2.4, 52mm (telephoto); 16 MP, f/2.2, 17mm (ultrawide); TOF 3D (depth) |
| | Features | Leica optics, dual-LED dual-tone flash, panorama, HDR |
| | Video | 2160p@30fps, 1080p@30fps |
| SELFIE CAMERA | | No (uses main camera) |
| SOUND | Loudspeaker | Yes |
| | 3.5mm jack | No |
| COMMS | WLAN | Wi-Fi 802.11 a/b/g/n/ac, dual-band, Wi-Fi Direct, hotspot |
| | Bluetooth | 5.0, A2DP, LE |
| | GPS | Yes, with dual-band A-GPS, GLONASS, BDS, GALILEO, QZSS |
| | NFC | Yes |
| | Infrared port | Yes |
| | Radio | No |
| | USB | 3.1, Type-C 1.0 reversible connector |
| FEATURES | Sensors | Fingerprint (side-mounted), accelerometer, gyro, proximity, compass, barometer |
| BATTERY | Type | Non-removable Li-Po 4500 mAh battery |
| | Charging | Fast charging 55W, 85% in 30 min (advertised), Huawei SuperCharge |
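The “~414 ppi” figure in the display specification can be checked from the listed resolution and screen size: pixel density is the number of pixels along the diagonal divided by the diagonal length in inches. A quick worked check, assuming the 8.0-inch figure is the exact diagonal of the unfolded screen:

```latex
\text{ppi} = \frac{\sqrt{2200^2 + 2480^2}}{8.0}
           = \frac{\sqrt{10\,990\,400}}{8.0}
           \approx \frac{3315.2}{8.0}
           \approx 414
```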


Huawei to Spend Rp3.6 Trillion to Build a 5G Factory in France


Huawei announced on Thursday, February 27, 2020, that it will spend more than 200 million pounds (approximately Rp3.6 trillion) to buy land and build a new factory in France.

According to ZDNet, Huawei will use the plant to manufacture 4G and 5G equipment, mainly for sale in the European market.

“As one of the most advanced manufacturing centers in the world, France has a mature industrial infrastructure and a pool of highly educated workers, and its geographical position is ideal for Huawei,” Huawei said.

The Chinese telecommunications giant said the facility would create 500 jobs and produce equipment worth 1 billion pounds per year.

“This plant will add to Huawei’s value chain in Europe, improving the timeliness and reliability of Huawei’s deliveries to European customers,” Huawei added.


Amid 5G Hurdles, Nokia CEO Rajeev Suri Resigns

Rajeev Suri has resigned as Nokia’s CEO after a series of disappointments related to 5G products and setbacks in China, Light Reading reported.


Kati Pohjanpalo (@pohjanka) wrote on Twitter: “Nokia is replacing CEO Rajeev Suri as it seeks to make up lost ground to Huawei and Ericsson in the race for 5G networks.”

He will be replaced by Pekka Lundmark, President and CEO of the energy company Fortum. Nokia announced the change in a statement today.

News of Rajeev Suri’s resignation follows reports that Nokia is considering various strategic options, including possible asset sales or mergers. Chairman Risto Siilasmaa also addressed these reports during a press conference about Suri’s departure.

Nokia’s share price reportedly lost more than a third of its value last year, and it fell 5.5% in Helsinki on Friday.

According to Nokia’s statement, Suri will stay in his role until August 31 and will then remain a strategic adviser to Nokia’s board until January 2021.

He is remembered for leading Nokia’s $17.3 billion takeover of rival Alcatel-Lucent. The deal established Nokia as one of the world’s three largest network equipment vendors, alongside China’s Huawei and Sweden’s Ericsson.
